- cross-posted to:

- technology@lemmy.world

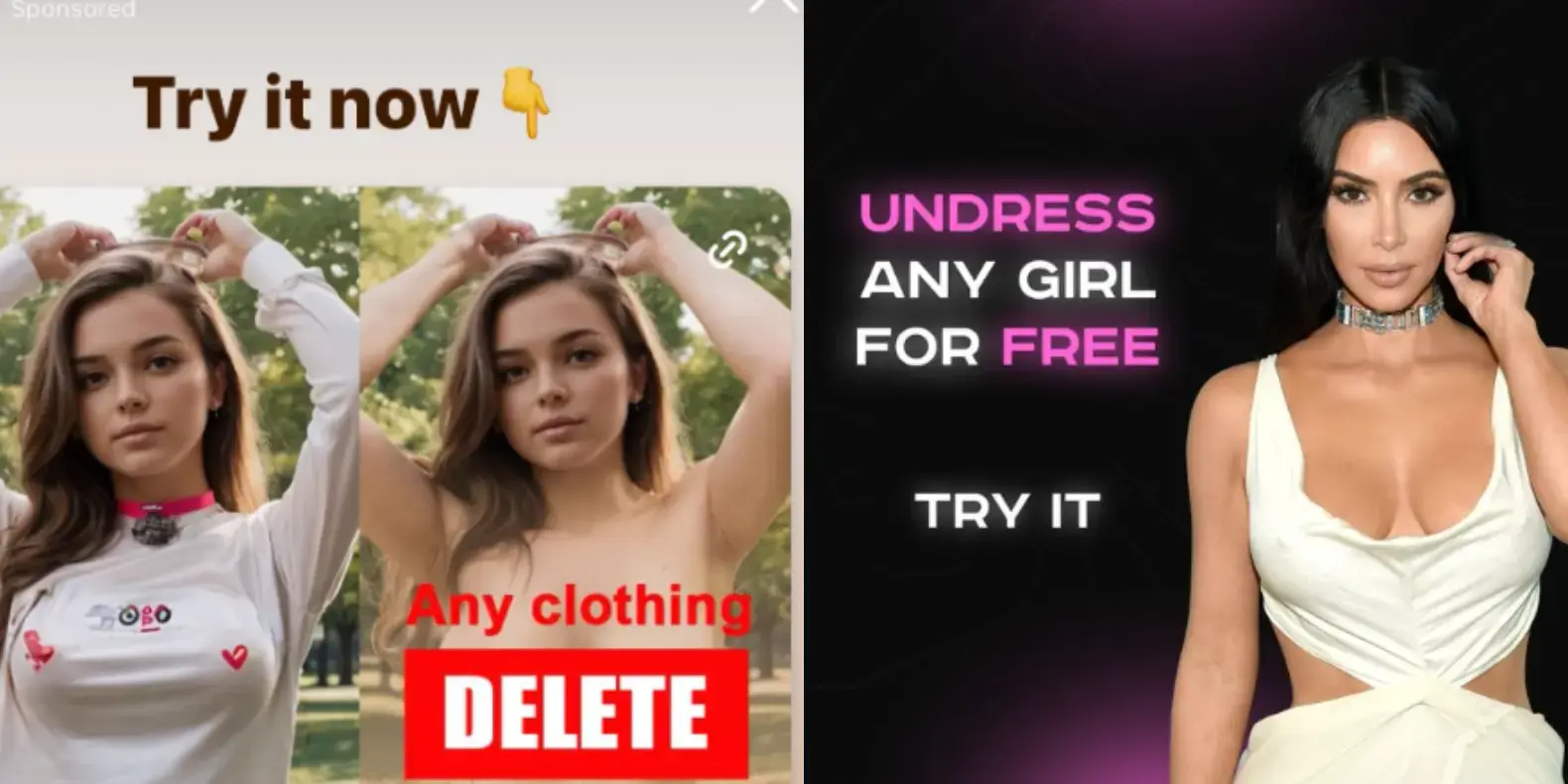

Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden in the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

While parent company Meta’s Ad Library, which archives ads on its platforms, who paid for them, and where and when they were posted, shows that the company has previously taken down several of these ads, many ads that explicitly invited users to create nudes, and some ad buyers, remained active until I reached out to Meta for comment. Some of these ads were for the best-known nonconsensual “undress” or “nudify” services on the internet.

God damn I hate this fucking AI bullshit so god damn much.

Flat earthers on the rise. I can only trust what I see with my eyes, the earth is flat!

How will this affect the courts? How can evidence be trusted?

what

I believe Tim means to say that the spread of misinformation can be linked to the rise of Flat Earthers. That if we can only trust what we see before us, and we see a flat horizon, we can directly interpret this visual to mean that the Earth is flat. Thus, if we cannot trust our own eyes and ears, how can future courtroom evidence be trusted?

“Up to the Twentieth Century, reality was everything humans could touch, smell, see, and hear. Since the initial publication of the chart of the electromagnetic spectrum, humans have learned that what they can touch, smell, see, and hear is less than one-millionth of reality.” -Bucky Fuller

^ basically that

Exactly

Now I know the source of that sample in the Incubus song New Skin. I’ve been curious about that for two decades. Thanks!

What’s the use of autonomy when a button does it all?